Recently I have been assessing the cyber security of Connected Experiences in Microsoft 365. This post shares what I have learned and what I reviewed.

Interpreting Microsoft's Connected Experiences Documentation

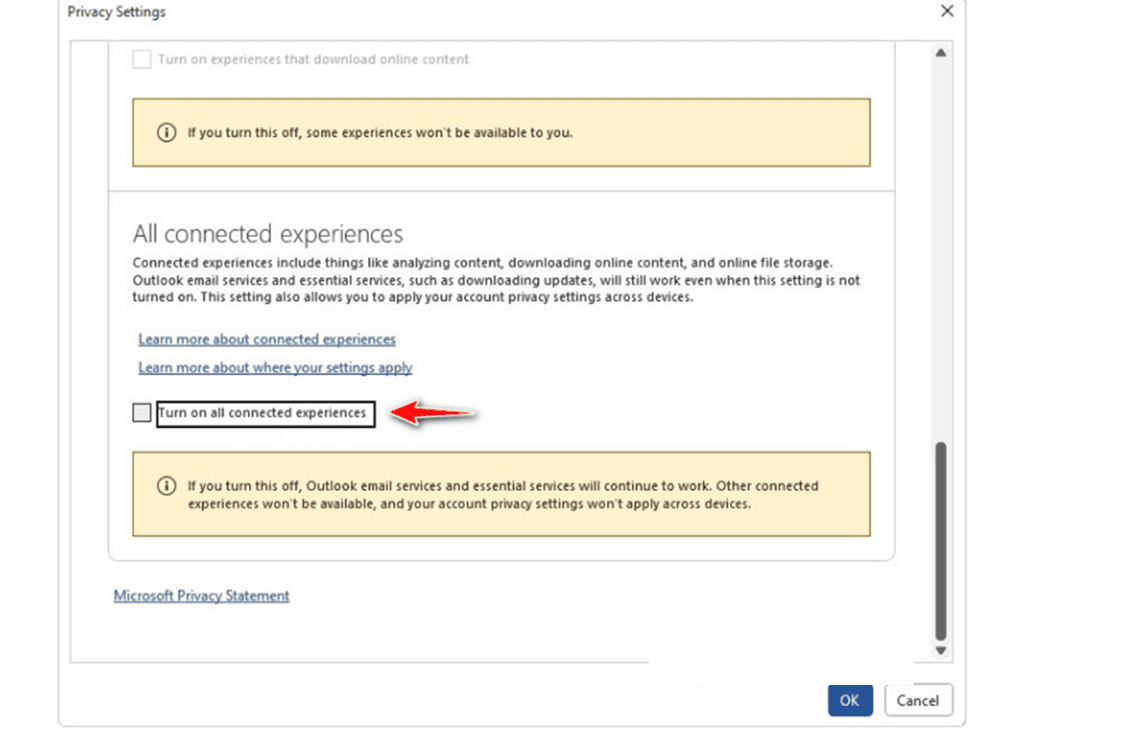

The first step was to review Microsoft's own documentation on Connected Experiences. Microsoft coined the term "connected experiences" to refer to features in Microsoft 365 apps that use cloud-based services to provide enhanced functionality (such as processing your data or downloading external content). Connected experiences are enabled in Microsoft 365 by default, and users can opt out in the settings of each app.

There are both required and optional connected experiences. Required connected experiences are core cloud-enabled functions that the apps need in order to operate. These include functions related to licensing and activation, file synchronisation with OneDrive/SharePoint, co-authoring, autosave, settings roaming, and core spellcheck. From a security perspective, the required connected experiences cannot be disabled, are considered fundamental for Microsoft 365 environments, and still involve data exchange with Microsoft's cloud.

Some examples of optional connected experiences in Microsoft 365 are:

PowerPoint Designer: providing design recommendations to your slide deck.

Dictation in Microsoft Word: transcribing audio voice recording to text.

Translator: translation from one language to another.

Downloading content like templates, 3D models, videos, Microsoft 365 help, etc.

Optional connected experiences can be disabled by enterprise policy. They fall into two groups: experiences that analyse content and experiences that download online content. For experiences that analyse content, the security concern is that user-generated data (audio, text, documents, segments of content) is uploaded to Microsoft's cloud. That data can be processed by Microsoft AI/ML pipelines, content may be stored temporarily to generate output, and some services may use offshore regions unless the tenancy's data residency rules enforce otherwise. Administrators can disable all such features via Group Policy or cloud policy. Experiences that download online content carry minimal risk because no content is uploaded; they mainly involve outbound retrieval of Microsoft-hosted assets and are often considered acceptable even in restricted environments.
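To make the two categories concrete, here is a minimal Python sketch of how an assessment might inventory optional experiences and flag the ones that send user content out of the tenancy. The class and function names (`Category`, `Experience`, `needs_data_review`) are hypothetical, and the inventory only repeats the examples listed in this post; it is not a complete list of Microsoft's features.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    ANALYSE_CONTENT = "analyse content"
    DOWNLOAD_CONTENT = "download online content"


@dataclass(frozen=True)
class Experience:
    name: str
    category: Category

    @property
    def uploads_user_content(self) -> bool:
        # Only the "analyse content" category sends user-generated data out.
        return self.category is Category.ANALYSE_CONTENT


# Illustrative inventory taken from the examples in this post, not a full list.
EXPERIENCES = [
    Experience("PowerPoint Designer", Category.ANALYSE_CONTENT),
    Experience("Dictation in Word", Category.ANALYSE_CONTENT),
    Experience("Translator", Category.ANALYSE_CONTENT),
    Experience("Template and 3D model downloads", Category.DOWNLOAD_CONTENT),
]


def needs_data_review(experiences):
    """Return the names of experiences that upload user content."""
    return [e.name for e in experiences if e.uploads_user_content]


if __name__ == "__main__":
    print(needs_data_review(EXPERIENCES))
```

Keeping the category on each entry means the review list falls out of the classification, so a newly discovered feature only needs to be categorised once.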

Let's take Word transcription as an example. A user either records audio or uploads an audio file within Microsoft Word, using the Transcribe option under the Dictate menu. That audio is uploaded to Microsoft's cloud, speech-to-text processing occurs on Microsoft servers, and the resulting transcript is returned to the local Word client. The audio is stored in the user's OneDrive unless manually deleted. Microsoft states that processing is temporary, that files are not stored on its servers, and that content is not used to train AI unless users opt in.

From a security perspective, it's worth noting how sensitive the audio may be, and that it may leave the device and be processed externally. You must verify where transcription processing occurs geographically (Microsoft's documentation is quite vague about the jurisdiction in which "speech services" are handled). Controls also exist to fully disable transcription via privacy policies.

Microsoft provides the following administrative controls for connected experiences:

Allow the use of connected experiences in Office

Allow the use of connected experiences in Office that analyze content

Allow the use of connected experiences in Office that download online content

These controls can be configured in Group Policy, the Office cloud policy service, or the Microsoft 365 Apps admin centre. In our example, choosing to block "experiences that analyse content" would block transcription.
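The blocking logic can be sketched as a small Python model. It assumes that the policies map to registry values under `HKCU\Software\Policies\Microsoft\office\16.0\common\privacy`, with the value names and the 1 = enabled / 2 = disabled encoding shown below. That is my understanding of Microsoft's privacy-controls documentation and should be verified before use. The function names are hypothetical, and the model is a simplification rather than how Office itself evaluates policy.

```python
# Registry value names as I understand them from Microsoft's privacy-controls
# documentation; they are assumptions here and should be verified.
# Location: HKCU\Software\Policies\Microsoft\office\16.0\common\privacy
MASTER = "disconnectedstate"           # connected experiences in Office
ANALYSE = "usercontentdisabled"        # experiences that analyse content
DOWNLOAD = "downloadcontentdisabled"   # experiences that download content

ENABLED, DISABLED = 1, 2  # assumed encoding: 1 = enabled, 2 = disabled


def category_allowed(settings: dict, category: str) -> bool:
    """True if the master switch and the category switch are both enabled.

    Missing values are treated as enabled, matching the default-on behaviour
    described in this post.
    """
    master = settings.get(MASTER, ENABLED)
    specific = settings.get(category, ENABLED)
    return master == ENABLED and specific == ENABLED


def transcription_allowed(settings: dict) -> bool:
    # Transcription analyses user content, so it follows the ANALYSE switch.
    return category_allowed(settings, ANALYSE)


if __name__ == "__main__":
    locked_down = {ANALYSE: DISABLED}
    print(transcription_allowed(locked_down))
    print(category_allowed(locked_down, DOWNLOAD))
```

In this model, disabling only the analyse-content switch blocks transcription while leaving content downloads available, which is the trade-off most restricted environments are looking for.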

Key security questions to consider are:

Where does Microsoft perform transcription processing? AU/EU/US?

Should users be allowed to record sensitive audio for transcription? The default stance should be no, unless data sovereignty can be confirmed.

What metadata is created when audio is saved to OneDrive? (timestamps, file names, participants, etc.)

What is the risk surface? Cloud processing of user generated content, identity linkages, stored audio files.

Can Word Transcription be enabled for specific user groups?

If the risk is considered too high, evaluate whether other tools are better suited to the task, or consider Microsoft Purview protections.